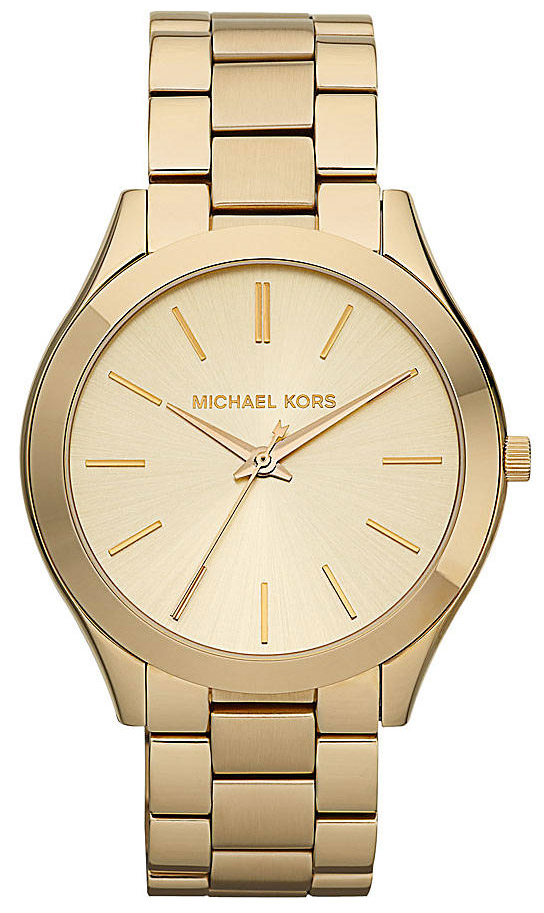

Women's watch | Michael Kors MK3265 | Znackovyvypredaj.sk - Luxury watches and handbags at up to 72% off

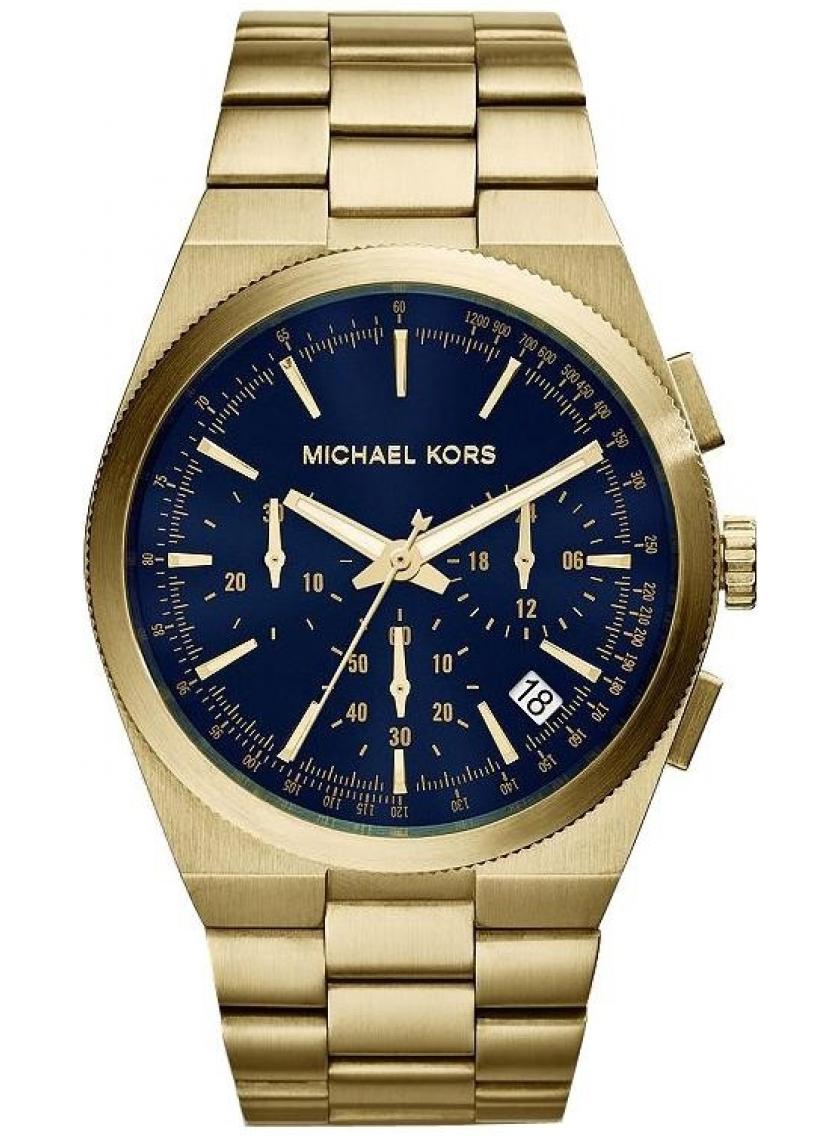

Cheap Michael Kors Men's Watches - Michael Kors Gen 5E Darci Pavé Gold-Tone Smartwatch, Gold | Michael Kors Slovakia

Women's watch | Michael Kors MK3498 | Znackovyvypredaj.sk - Luxury watches and handbags at up to 72% off

/michael-kors-hodinky-runway-mk8907-tmavomodra.jpg)

/michael-kors-hodinky-runway-mk4390-modra.jpg)

/michael-kors-hodinky-mk9091-tmavomodra-4064092214284.jpg)

/michael-kors-hodinky-pyper-mk2739-modra.jpg)